A bunch of news about computer vision, computer graphics, GPGPU, or a mix of the three...

Friday, January 28, 2011

ATI Stream SDK becomes AMD Accelerated Parallel Processing (APP) SDK

"AMD has renamed its well-known OpenCL SDK, called ATI Stream SDK, to AMD APP SDK. APP stands for Accelerated Parallel Processing."

What is AMD APP Technology?

AMD APP technology is a set of advanced hardware and software technologies that enable AMD graphics processing cores (GPU), working in concert with the system’s x86 cores (CPU), to accelerate many applications beyond just graphics. This enables better balanced platforms capable of running demanding computing tasks faster than ever, and sets software developers on the path to optimize for AMD Accelerated Processing Units (APUs).

What is the AMD APP Software Development Kit?

The AMD APP Software Development Kit (SDK) is a complete development platform created by AMD to allow you to quickly and easily develop applications accelerated by AMD APP technology. The SDK allows you to develop your applications in a high-level language, OpenCL™ (Open Computing Language).

What is OpenCL™?

OpenCL™ is the first truly open and royalty-free programming standard for general-purpose computations on heterogeneous systems. OpenCL™ allows programmers to preserve their expensive source code investment and easily target both multi-core CPUs and the latest GPUs, such as those from AMD.

Developed in an open standards committee with representatives from major industry vendors, OpenCL™ gives users what they have been demanding: a cross-vendor, non-proprietary solution for accelerating their applications on their CPU and GPU cores.

To learn more, see the OpenCL Zone.

To get the AMD APP SDK with OpenCL Support, download here.

Source: Ozone3D and AMD

Thursday, January 27, 2011

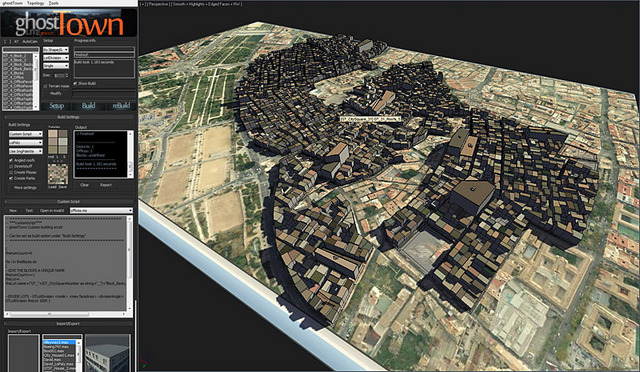

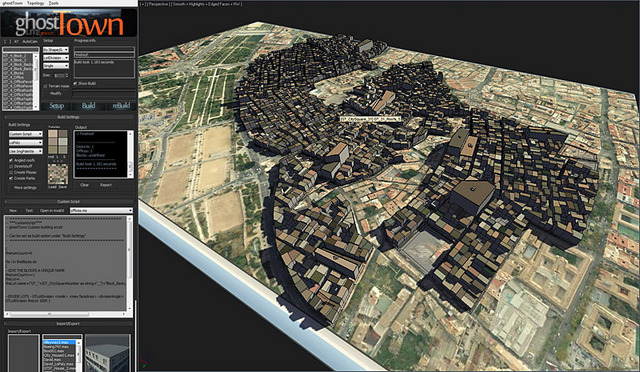

City generator

ghostTown is a plugin for 3ds Max.

It allows you to:

- create cities,

- in high-poly or low-poly versions,

- create roads from a given path,

- use its own material system,

- use basic scripting to create "facades"...

In the future it should support "editable scripts" and OpenStreetMap, in order to import the road layer of existing maps!

ghostTown 0.21 Lite from kila D on Vimeo.

The GhostTown forum

Wednesday, January 26, 2011

AMD OpenCL University Kit

"AMD presents the OpenCL University Kit, a set of materials for teaching a full semester course in OpenCL programming. Each lecture includes instructor notes and speaker notes, plus code examples."

http://developer.amd.com/

Students only need basic knowledge of C/C++ programming to understand the materials in this course, and those students with basic knowledge of OpenCL programming can start with Lecture 5. A C/C++ compiler and an OpenCL implementation (such as the AMD APP SDK) are needed to complete the exercises.

Lecture 1: Introduction to Parallel Computing

Lecture 2: Introduction to OpenCL

Lecture 3: Introduction to OpenCL, continued

Lecture 4: GPU Architecture

Lecture 5: OpenCL Buffers and Complete Examples

Lecture 6: Understanding GPU Memory

Lecture 7: GPU Threads and Scheduling

Lecture 8: Optimizing Performance

Lecture 9: OpenCL Programming and Optimization Case Study

Lecture 10: OpenCL Extensions

Lecture 11: Events Timing and Profiling

Lecture 12: Debugging

Lecture 13: Programming Multiple Devices

Intel Labs RGB-Depth section

RGB-D: Techniques and usages for Kinect-style depth cameras

An RGB-D project

The RGB-D project is a joint research effort between Intel Labs Seattle and the University of Washington Department of Computer Science & Engineering. The goal of this project is to develop techniques that enable future use cases of depth cameras. Using the PrimeSense depth cameras underlying the Kinect technology, we've been working on areas ranging from 3D modeling of indoor environments to interactive projection systems, and from object recognition to robotic manipulation and interaction.

Below you will find a list of videos illustrating our work. More detailed technical background can be found in our research areas and at the UW Robotics and State Estimation Lab. Enjoy!

3D Modeling of Indoor Environments

Depth cameras provide a stream of color images and depth per pixel. They can be used to generate 3D maps of indoor environments. Here we show some 3D maps built in our lab. What you see is not the raw data collected by the camera, but a walk through the model generated by our mapping technique. The maps are not complete; they were generated by simply carrying a depth camera through the lab and aligning the data into a globally consistent model using statistical estimation techniques.
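To make the "depth per pixel" idea concrete, here is a minimal sketch (not the RGB-D team's code) of how a single depth frame can be turned into 3D points. It assumes an ideal pinhole camera with hypothetical intrinsics `fx`, `fy`, `cx`, `cy`, depth values in meters, and 0 meaning "no reading"; the function name `backproject` is made up for illustration.

```python
def backproject(depth, fx, fy, cx, cy):
    """Turn a depth map into a list of (x, y, z) points in camera coordinates.

    depth: list of rows, each a list of depth values in meters (0 = no reading).
    fx, fy: focal lengths in pixels; cx, cy: principal point in pixels.
    Assumes an ideal pinhole camera with no lens distortion.
    """
    points = []
    for v, row in enumerate(depth):      # v = pixel row index
        for u, z in enumerate(row):      # u = pixel column index
            if z <= 0:
                continue                 # skip pixels without a depth reading
            x = (u - cx) * z / fx        # invert the projection u = fx * x / z + cx
            y = (v - cy) * z / fy        # same for the vertical axis
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # Tiny 2x2 depth map with one missing pixel (made-up values).
    frame = [[1.0, 2.0],
             [0.0, 1.5]]
    for point in backproject(frame, fx=500.0, fy=500.0, cx=0.5, cy=0.5):
        print(point)
```

Each frame gives a point cloud in the camera's own coordinate frame; building a map then means estimating the camera motion between frames and aligning these clouds into one model.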

3D indoor models could be used to automatically generate architectural drawings, allow virtual flythroughs for real estate, or support remodeling and furniture shopping by inserting 3D furniture models into the map.

Interactive Flythrough

Here we show interactive navigation through a 3D model. The visualization can be done in stereoscopic 3D using shutter glasses (just like in the movie Avatar). The system uses a depth camera to control the navigation.

3D Mapping

This video demonstrates the mapping process. Shown is a top view of the 3D map generated by walking with the depth camera through the lab. The system automatically estimates the motion of the camera and detects loop closures, which help it to globally align the camera frames. No external information or sensor is used.
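The core of "estimating the motion of the camera" is finding the rigid transform that best aligns points seen in two frames. The video doesn't say exactly how Intel/UW do it, so this is only a sketch of the standard closed-form least-squares fit, simplified to 2D (like the top view) and assuming point correspondences are already known, e.g. from matched visual features. `fit_rigid_2d` is a hypothetical helper; the 3D case is usually solved with an SVD (Kabsch method), and ICP-style methods repeat such a fit while re-estimating correspondences.

```python
import math


def fit_rigid_2d(src, dst):
    """Least-squares rotation + translation mapping src points onto dst.

    src and dst are equal-length lists of (x, y) pairs with known
    correspondences (src[i] matches dst[i]). Returns (theta, tx, ty) such
    that dst[i] is approximately R(theta) * src[i] + (tx, ty).
    """
    n = len(src)
    if n == 0 or n != len(dst):
        raise ValueError("need the same non-zero number of points")
    # Centroids of both point sets.
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    # Sum dot and cross products of the centered points; their ratio
    # gives the rotation angle that minimizes the squared error.
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - sx, py - sy
        bx, by = qx - dx, qy - dy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation moves the rotated src centroid onto the dst centroid.
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty


if __name__ == "__main__":
    # Synthetic test: rotate a unit square by 30 degrees and shift it by (1, 2).
    square = [(0, 0), (1, 0), (1, 1), (0, 1)]
    ang = math.radians(30)
    c, s = math.cos(ang), math.sin(ang)
    moved = [(c * x - s * y + 1.0, s * x + c * y + 2.0) for x, y in square]
    theta, tx, ty = fit_rigid_2d(square, moved)
    print(math.degrees(theta), tx, ty)
```

Chaining these frame-to-frame estimates accumulates drift, which is why detecting loop closures matters for a globally consistent map.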

Interactive Mapping

We want to enable novice users to build 3D maps with depth cameras. Here you see our interactive mapping system. The system processes the depth camera data in real time and warns the user when the collected data is not suitable for a map. The approach also suggests areas that have not yet been modeled appropriately.

To learn more: Intel Labs RGBD

Labels:

Computer Graphics,

computer vision,

Kinect,

Tech demo

Monday, January 24, 2011

Kinect hack to play Cube 2

This developer wrote a hack that lets you control the game Cube 2 with a Kinect.

The depth map is simply thresholded, and the position of the resulting blob is used to set the direction of the game character.
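A minimal sketch of the threshold-and-blob idea, assuming depth values in meters with 0 for missing readings, a hypothetical `max_depth` cut-off, and that only the horizontal position of the blob matters. This is not the author's code, just one way to do it; `steer_from_depth` is a made-up name, and it averages all foreground pixels rather than extracting a proper connected component.

```python
def steer_from_depth(depth, max_depth, dead_zone=0.1):
    """Threshold a depth map and turn the blob position into a direction.

    depth: list of rows of depth values (0 = no reading).
    max_depth: pixels closer than this count as the player's blob.
    dead_zone: normalized offset around the center that maps to "straight".
    Returns "left", "right", "straight", or None if no blob is found.
    """
    count = 0
    sum_u = 0
    for row in depth:
        for u, z in enumerate(row):
            if 0 < z < max_depth:        # foreground pixel after thresholding
                count += 1
                sum_u += u
    if count == 0:
        return None                      # nobody close enough to the camera
    width = len(depth[0])
    center = (width - 1) / 2
    half = center or 1.0                 # avoid dividing by zero for 1-pixel-wide maps
    # Horizontal offset of the blob centroid, normalized to [-1, 1].
    offset = (sum_u / count - center) / half
    if offset < -dead_zone:
        return "left"
    if offset > dead_zone:
        return "right"
    return "straight"


if __name__ == "__main__":
    # Made-up 2x5 depth map: the near blob sits on the left of the image.
    frame = [[0.0, 3.0, 3.0, 3.0, 3.0],
             [0.8, 0.9, 3.0, 3.0, 3.0]]
    print(steer_from_depth(frame, max_depth=1.0))
```

Whether image-left is the player's left depends on whether the camera image is mirrored, so a real hack would have to flip the result accordingly.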

Labels:

Computer Graphics,

computer vision,

Human Machine Interface,

Kinect,

Tech demo

Video sequences from the late Project Offset

This video shows many sequences made for the fantasy FPS "Project Offset"... It's a pity that Intel abandoned the project. The game's style looked so good!

Ted Lister Animation Reel November 2010 from Ted Lister on Vimeo.

Thursday, January 20, 2011

Monday, January 17, 2011

Asus Wavi Depth Map and Demo

A short video that shows the depth map and usage of the Asus Wavi (a Kinect clone from Asus).

To see the depth map, skip to 2:55 or follow the link:

http://www.youtube.com/watch?v=wAXdgXOMl8I&feature=player_embedded#t=175s

Wednesday, January 12, 2011

Visual Sudoku Solver

Google Goggles now offers a visual Sudoku solver:

I think it uses OCR and linear programming to find the Sudoku solution.

It reminds me of Martin Byrod's prototype, which already did this before Google (but not on phones):

Example: Sudoku solver via LP (linear programming):

http://www.noisette.ch/wiki/index.php/Sudoku + online Demo
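For the curious, Sudoku is usually written as a 0-1 integer linear program with binary variables $x_{r,c,v}$ equal to 1 when cell $(r, c)$ holds value $v$. This is the standard textbook formulation; I don't know the exact model used by Google Goggles or the noisette.ch demo.

```latex
\begin{aligned}
&\text{find } x_{r,c,v} \in \{0,1\}, \quad r, c, v \in \{1,\dots,9\}, \text{ such that} \\
&\textstyle\sum_{v=1}^{9} x_{r,c,v} = 1 \quad \forall r, c \quad \text{(one value per cell)} \\
&\textstyle\sum_{c=1}^{9} x_{r,c,v} = 1 \quad \forall r, v \quad \text{(each value once per row)} \\
&\textstyle\sum_{r=1}^{9} x_{r,c,v} = 1 \quad \forall c, v \quad \text{(each value once per column)} \\
&\textstyle\sum_{r=3i+1}^{3i+3} \sum_{c=3j+1}^{3j+3} x_{r,c,v} = 1 \quad \forall v,\ i, j \in \{0,1,2\} \quad \text{(each value once per 3x3 box)} \\
&x_{r,c,v} = 1 \quad \text{for every given clue } (r, c, v)
\end{aligned}
```

Any objective (e.g. minimizing 0) will do, since every feasible point is a valid grid. Relaxing $x_{r,c,v} \in \{0,1\}$ to $0 \le x_{r,c,v} \le 1$ gives a plain LP, which solves many easy puzzles directly but is not guaranteed to return an integral solution for harder ones.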

Labels:

Computer Graphics,

computer vision,

Tech demo

Tuesday, January 11, 2011

Kinect 3D reconstruction of interiors

The first prototype:

An evolution of the first prototype:

And navigation in a reconstructed scene:

But reconstructing exteriors is not possible with this technology, because of its active infrared 3D depth sensor: in sunlight, the projected infrared pattern gets washed out.

Labels:

Computer Graphics,

computer vision,

Kinect,

Tech demo

Thursday, January 6, 2011

Microsoft Surface 2.0

Only the hardware changes: there is no more camera to detect multi-touch. The screen now embeds sensing pixels.

Want to know more?

http://www.engadget.com/2011/01/05/microsoft-shows-off-next-generation-of-surface-has-per-pixel-to/

Tuesday, January 4, 2011

Augmented reality... what's new in 2010?

Follow this link to see what appeared in 2010:

http://thomaskcarpenter.com/2011/01/03/augmented-reality-year-in-review-2010/

They missed a few things... like popcode.info

Kinect hardware alternative from Asus

Wavi Xtion is the name of the Asus product that "clones" the Kinect technology for the PC world. It seems to be based on PrimeSense technology.

If you want to know more: Here

Labels:

Computer Graphics,

computer vision,

Human Machine Interface,

Kinect
